BIO

I am currently a student at Drexel University studying Computer Science, with concentrations in Software Engineering, Data Structures & Algorithms, and Artificial Intelligence. My passion for development pushes me to think outside the box and come up with creative solutions to difficult problems, whether that means stitching concert videos together using audio fingerprinting, creating a new music interface with the Leap Motion, or making robots dance to music like humans using Markov chain algorithms. All in all, I love programming, development, and just about everything computer-related (and music, of course!).

Personal Website: markkoh.net

Education

- B.S. in Computer Science, Drexel University (anticipated June 2016)

- Major: Computer Science

- Minor: Software Engineering

- Concentrations: Data Structures & Algorithms, Artificial Intelligence, and Software Engineering

Projects

Automatic Audio Alignment

With the vast improvement in smartphone video technology in recent years, multiple videos of the same event (e.g., a music concert) are becoming increasingly common. Given a large collection of videos from an event, we could let people relive the concert or event. By identifying precisely where the videos overlap, we can align them and combine them to recreate the original experience. Aligning videos manually was already possible, but it took extensive time and effort, which prompted us to create an automated solution.
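As a rough illustration of what "identifying where videos overlap" involves, the sketch below estimates the time offset between two audio tracks by brute-force cross-correlation. This is a swapped-in, simplified technique for illustration only, not the fingerprinting approach used in the project; the function name `best_offset` and its parameters are hypothetical.

```python
def best_offset(a, b, max_lag):
    """Estimate the lag (in samples) at which clip b best lines up with clip a.

    Illustrative only: plain cross-correlation over raw samples, assuming
    both clips share a sample rate. A positive result means b starts that
    many samples after a starts; a negative result means b starts earlier.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, sample in enumerate(b):
            j = i + lag  # index in a that b[i] would line up with
            if 0 <= j < len(a):
                score += a[j] * sample
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


# Toy example: clip b is a four-sample excerpt of clip a.
long_clip = [0, 0, 0, 1, 3, -2, 4, 0, 0, 0]
short_clip = [1, 3, -2, 4]
offset = best_offset(long_clip, short_clip, max_lag=5)
```

Real recordings differ in volume, noise, and microphone quality, which is one reason a more robust feature such as an audio fingerprint is a better fit than raw samples.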

You can see the poster for the 2015 Colonial Academic Alliance Undergraduate Research Conference here.

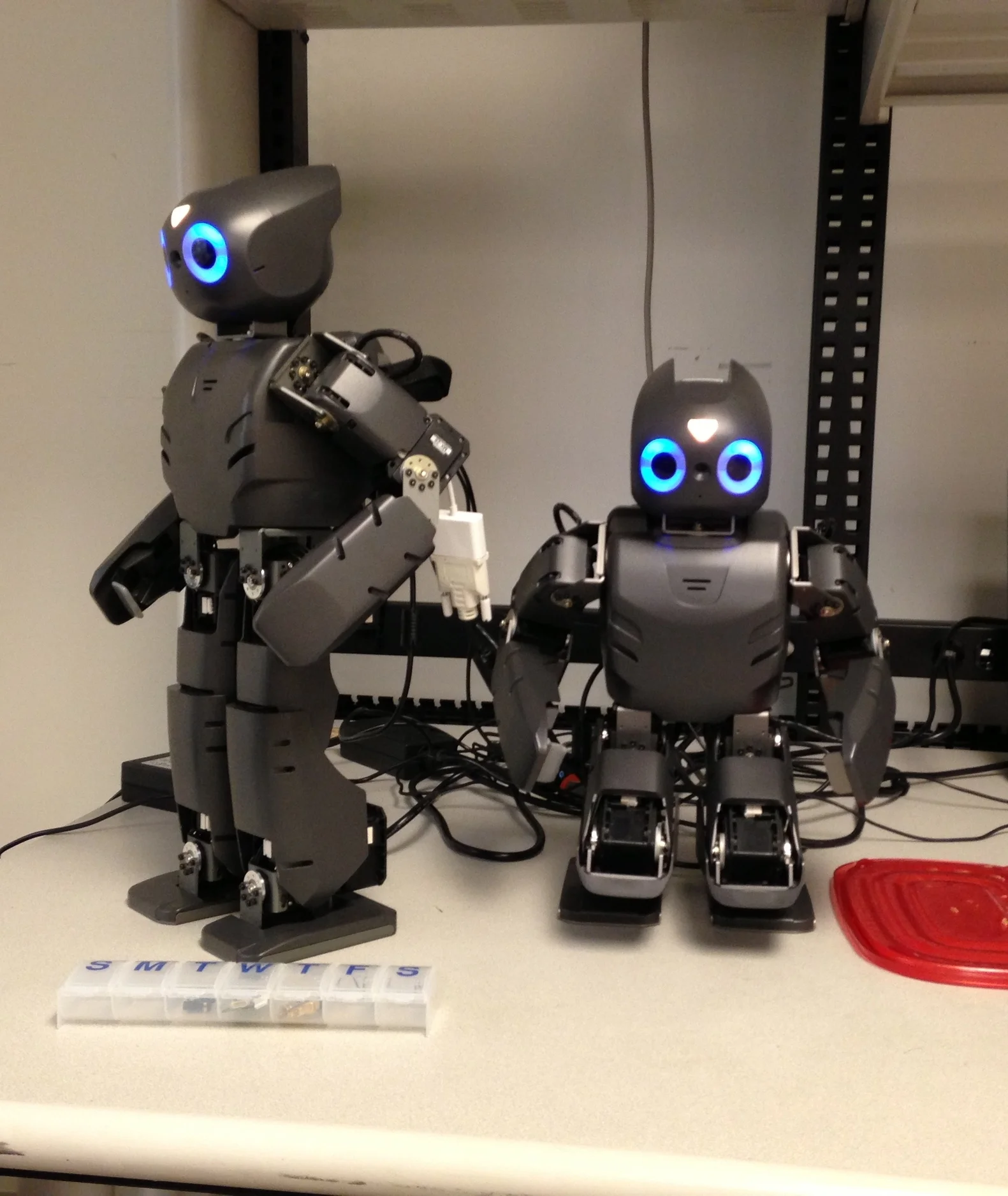

DARwIn-OP Humanoid Dance Synthesis

The DARwIn-OP (Dynamic Anthropomorphic Robot with Intelligence) is a miniature humanoid robot with advanced computational power and dynamic motion abilities that allow him to move in ways similar to humans. My project with DARwIn focuses on procedural dance generation using Markov chain algorithms. Markov chains are a pattern-analysis tool usually used to generate realistic-sounding text from a sample text. I've adapted this approach to analyze dance sequences and generate seemingly non-random dance performances that flow smoothly from one position to another. The program also incorporates a beat tracker developed by a MET-lab graduate student, David Grunberg, which allows DARwIn to track audio in real time and dance to the beat.
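The Markov chain idea described above can be sketched in a few lines. This is a minimal first-order example over named poses, assuming a dance is stored as a list of pose labels; the pose names and the functions `build_transitions` and `generate` are hypothetical and are not taken from the DARwIn code.

```python
import random
from collections import defaultdict


def build_transitions(sequence):
    """Record, for each pose, every pose that followed it in the sample dance."""
    transitions = defaultdict(list)
    for current, following in zip(sequence, sequence[1:]):
        transitions[current].append(following)
    return transitions


def generate(transitions, start, length, rng=None):
    """Walk the chain from `start`, choosing each next pose at random
    from the poses that followed the current one in the sample."""
    rng = rng or random.Random()
    pose = start
    result = [pose]
    while len(result) < length:
        options = transitions.get(pose)
        if not options:  # pose never had a successor in the sample
            break
        pose = rng.choice(options)
        result.append(pose)
    return result


# Hypothetical sample dance used to train the chain.
sample_dance = ["stand", "arm_up", "spin", "arm_up", "stand", "bow"]
chain = build_transitions(sample_dance)
new_dance = generate(chain, start="stand", length=8, rng=random.Random(0))
```

Because every generated step was observed in the sample, the new sequence only contains transitions a human choreographer already used, which is what keeps the result from looking random.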

LiveNote: Orchestra Music Companion

LiveNote is a system that guides users through a live orchestral performance in real time using a handheld application. Using audio features, we attempt to align the live performance audio with a previously annotated reference recording. The aligned position is transmitted to users' handheld devices, and pre-annotated information about the piece is displayed in sync with the performance.
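The display step can be pictured as a lookup from the aligned position to the most recent annotation. The sketch below assumes annotations are stored as (start time in seconds, text) pairs sorted by time; that storage format, the sample annotations, and the function `current_annotation` are assumptions for illustration, not LiveNote's actual design.

```python
from bisect import bisect_right


def current_annotation(annotations, position_seconds):
    """Return the text of the latest annotation starting at or before the
    given position in the reference recording, or None if none has started.

    `annotations` is assumed to be a list of (start_seconds, text) pairs
    sorted by start time.
    """
    starts = [start for start, _ in annotations]
    index = bisect_right(starts, position_seconds) - 1
    return annotations[index][1] if index >= 0 else None


# Hypothetical annotations for one piece.
notes = [
    (0.0, "Opening theme"),
    (42.5, "Oboe solo"),
    (90.0, "Recapitulation"),
]
shown = current_annotation(notes, 50.0)
```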

My contribution to the project was the LiveNote authoring system, a content management system that allowed experts from the Orchestra to easily write their own annotations for each piece. The system also allowed the Orchestra to advance annotations manually in the event that automatic detection of the position within the concert failed.